L@rge (Languag¬)^Models

You made a box. You’re quite happy with it - as is.

But you need to fill it with dirt to pot some plants or find a divorce attorney. The dirt is at Home Depot in bags. Probably in the garden section but maybe if you are lucky the bags are just out front so you don’t have to walk through the garden section.

Now, you don’t want to buy too much dirt because then your wife may want you to build another flower box.

This would equate to work. Which is to be avoided at all costs.

How do you determine how many bags of dirt you need?

A protohuman would carry the box to Home Depot, put it by the bags of dirt, point from the bag of dirt to the box and grunt “Urgha?” Which loosely translates to “How much dirt..?”

The outstretched arm is acting as a vector. More on this in a second.

So “Urgha” + gesturing like a weather vane => “How much dirt do I need to fill that box up?”

That is a language transform. From a paralinguistic language to American English.

Our brains are well suited to this sort of transformative analysis.

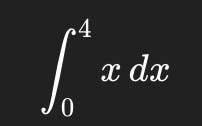

Since I can’t type out a box, I am going to convert this to two dimensions. Which is a fancy way of saying “will cause paper cuts” but not splinters.

Here is our box in two dimensions. The green shaded area is where we want to put dirt. Don’t ask why it’s at an angle. Best not to ask such questions with regard to decorative plant hangers.

Written in another way: Laske käyrän y = x alapuolinen pinta-ala välillä x = 0–4.

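Or, if you don’t know Finnish: calculate the area under the line y = x between x = 0 and x = 4.

Or in the symbols of mathematics: ∫₀⁴ x dx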

Or in simple Python:

```python
# Area of a right triangle with base 4 and height 4
result = (4**2) / 2
print(result)
```

Or in more formulaic Python:

```python
import sympy as sp

x = sp.symbols('x')
# Integrate y = x from x = 0 to x = 4
integral = sp.integrate(x, (x, 0, 4))
print(integral)
```

Or, if you don’t know the symbology of mathematics, Finnish or the Python programming language: How many blocks does the green shaded area cover?

Or if you already figured it out: 8.
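If you would rather check than take my word for it, here is a minimal sketch that asks the same question two ways: once as a triangle, once by counting the shaded area column by column. The function names `triangle_area` and `count_blocks` are my own inventions for illustration, not anything from a library.

```python
def triangle_area(base, height):
    """Area of a right triangle: the geometric reading of the shaded region."""
    return base * height / 2


def count_blocks(upper, columns):
    """Add up the shaded area column by column (a midpoint sum under y = x)."""
    width = upper / columns
    # Each column is `width` wide and as tall as the line y = x at its midpoint.
    return sum((i + 0.5) * width * width for i in range(columns))


print(triangle_area(4, 4))
print(count_blocks(4, 4))
```

Both lines print 8.0. Two more fingers pointing at the same number.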

All of these written statements, no matter how foreign they appear to you, are saying the exact same thing. As an exercise (do this), take your finger and point from the Python code to the number “8”. You have become an LLM. Or at least you have become a vector in an LLM.

I want to be very clear.

That graphical image is the same as the number 8, which is the same as that Finnish gobbledygook. We can describe reality in a multitude of ways. But our descriptions are still not reality. 8 is not the box needing to be filled with dirt.

All that LLMs do is have lots and lots of fingers that point from one representation of information to another. And in the case of LLMs, where each finger points is probabilistic. If you were to cut out each representation above of “How much dirt…” and waggle your finger around with a blindfold on, when you stopped waggling, your finger would point at one of them. But which one is probabilistic.
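The blindfolded finger can be sketched in a few lines of Python. This is a toy under loud assumptions: the weights are numbers I made up, the function name `waggle_finger` is hypothetical, and a real LLM picks among tokens rather than whole sentences. It only shows what “where the finger points is probabilistic” means.

```python
import random

# The representations of "How much dirt?" that appear in this post.
representations = [
    "Laske käyrän y = x alapuolinen pinta-ala välillä x = 0–4.",
    "(4**2) / 2",
    "sp.integrate(x, (x, 0, 4))",
    "How many blocks does the green shaded area cover?",
    "8",
]

# Made-up weights (an assumption): how likely the finger is to stop on each one.
weights = [0.05, 0.15, 0.10, 0.20, 0.50]


def waggle_finger(rng):
    """Pick one representation at random, in proportion to its weight."""
    return rng.choices(representations, weights=weights, k=1)[0]


rng = random.Random()  # pass a seed, e.g. random.Random(0), for repeatable waggling
for _ in range(5):
    print(waggle_finger(rng))
```

Run it a few times. The finger lands on “8” most often, but not always, and nothing new ever appears in the list.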

LLMs are just transposing one representation of information from one language to another. It appears miraculous at times. But that is only because you don’t know everything. For example, you probably didn’t know Finnish. That doesn’t mean anything magical happened.

What is important to understand is that this translation or transposition of existing information does not create new information. LLMs are not inventing new information. Recently, there have been numerous news stories about LLMs solving mathematical proofs that hadn’t been solved before.

Only for the researchers to find out after publishing the result that the problems had been solved before. It was just that no human had cared enough to update the website where these solutions were being catalogued. The LLM pointed out the researchers’ ignorance. It didn’t expand the corpus of human knowledge. Just the corpus of the researchers’ knowledge.

This reminds me of the movie Jerry Maguire when Jonathan Lipnicki’s character says “did you know the human head weighs eight pounds?” How many people in the audience didn’t know that? Did you know it’s actually false? The average human head weighs 11 lbs.

The hope of the AI community (which is really a delusion) is that LLM-based AI will be able to solve unknown problems of physics, chemistry, medicine and biology. This is based on the belief that if a person can think up ten ideas in an hour and an AI can think up 10 trillion ideas in an hour, then AI can speed up the research process, essentially allowing generations of human thought to be condensed into hours or days.

But, that isn’t how progress in science works. Progress works from failure. Einstein had a happy thought - once.

Almost every other discovery was because a person stubbed their foot on a rock, picked it up to throw it and afterwards their hand tingled strangely and then a week later fell off and we discovered radiation.

LLMs are not capable of either task. Though they will confidently tell you to pick up more rocks, which will waste a lot of human time. And testing 10 trillion new ideas takes just as long whether humans think them up or an LLM spits them out.

Just like spending money to go to Mars (an environment we cannot live in), rediscovering tunnels (the hyperloop), and repeating the failures of previous generations with regard to fusion, we are wasting massive amounts of time on (and with) LLMs.

Money isn’t real.

Time is.

I am not saying chatbots don’t have their uses. LLMs are great (if unfortunately very dishonest and overconfident) librarians. But LLMs aren’t a young Einstein. And they don’t have feet to kick rocks either.

-AJ